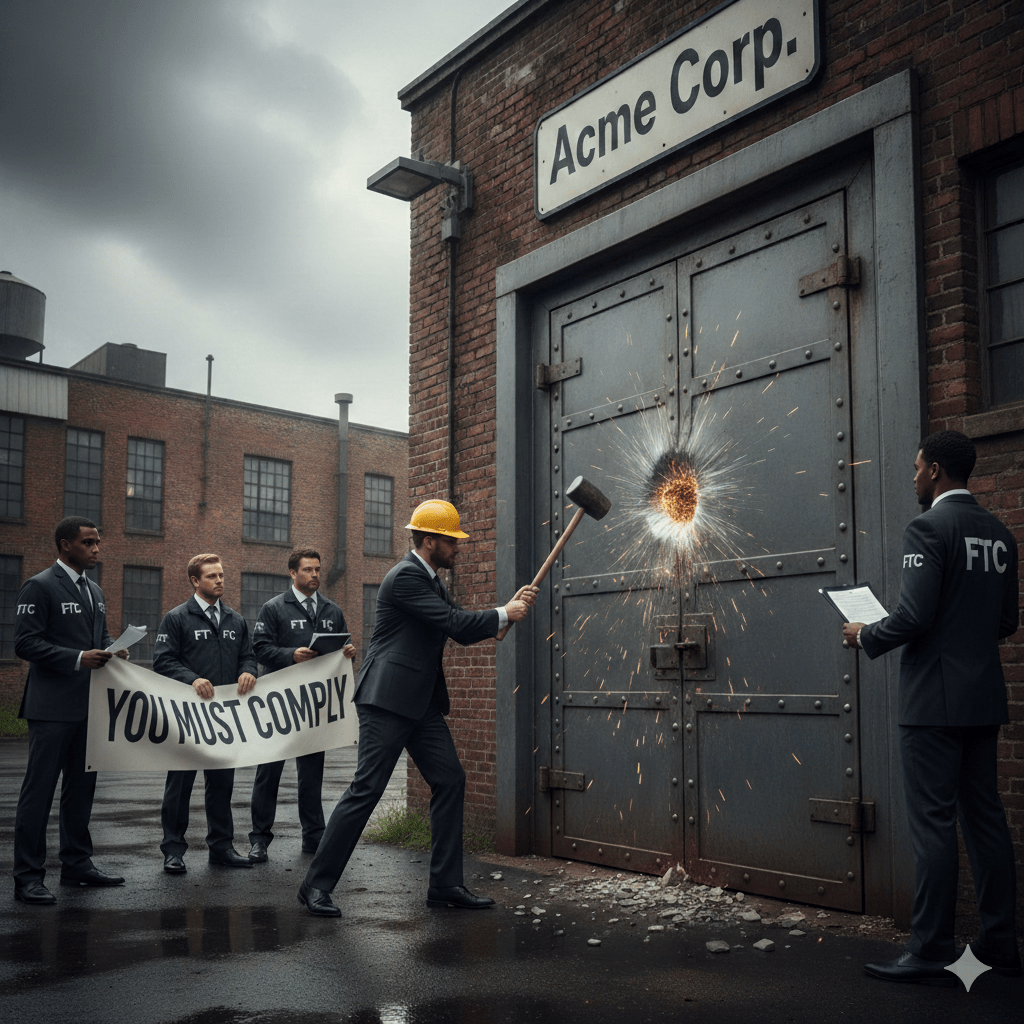

This news is a few months old, but it matters for companies exploring AI. If you plan to use AI-assisted chatbots, ensure your data privacy and cybersecurity systems, processes, policies, and procedures are in order.

On September 11, 2025, the FTC announced 6(b) orders to seven major tech companies (Alphabet, Character Technologies, Instagram, Meta, OpenAI, Snap, and X.AI) to investigate the impact of AI-powered companion chatbots on children and teens. FTC 6(b) orders are compulsory administrative demands for information issued under Section 6(b) of the FTC Act. The September 11 orders focus the inquiry on safety, data handling, monetization, and potential negative effects of AI, marking a significant step toward federal regulation of consumer-facing AI products.

Key Aspects of the FTC 6(b) Orders:

- Focus Area: The inquiry targets how generative AI chatbots act as companions for children and teenagers, particularly assessing safety, data usage, and psychological impacts.

- Targeted Information: The FTC is seeking detailed information regarding:

  - Data Handling: How user input data is processed, stored, and used.

  - Safety Measures: Methods for pre- and post-deployment monitoring for harmful content.

  - Monetization: How user interaction and engagement are monetized.

  - Targeting: Strategies regarding advertising and user engagement for minors.

- Regulatory Authority: Section 6(b) of the FTC Act allows the Commission to conduct wide-ranging studies that do not have a specific law enforcement purpose, gathering information to understand industry practices before any potential enforcement action.

- Context: This follows increasing scrutiny of AI, including a prior January 2025 report on partnerships between cloud service providers and AI developers.

The benefit of these 6(b) orders is that the questions they ask give companies a potential roadmap of the steps, methods, and processes regulators are likely to expect under existing data privacy and cybersecurity requirements.

According to Peter H. Gregory in his IAPP Artificial Intelligence Governance Professional study guide, “Organizations with low process maturity, particularly in systems development and IT service management, will face significantly higher risks of problems with their AI systems.” Simply put: if you are not evaluating your data privacy and data security obligations and confirming your current capabilities, you are already behind the curve when it comes to implementing AI into your services or tools.

The recent actions demonstrate the FTC’s proactive approach to applying existing consumer protection authorities to emerging AI technologies, specifically focusing on privacy and safety risks. Now is a great time to start that evaluation.

Troutman Amin LLP is available to assist in developing the data privacy policies and processes you are required to have in place.